“The first principle is that you must not fool yourself – and you are the easiest person to fool.”

– Richard Feynman

There are several Connecticut-based birthday party clowns selling retail investors and the Hedge Fund Hopeful crowd a market analytics and data subscription product via email. The clown car pulls up every morning with “actionable” “ideas” and “insights” for boys and girls of all ages to enjoy. But not all of the purveyors of these products are outwardly claiming that their subscribers can use this “data” and “perspective” to beat the stock / bond / currency markets on a consistent basis. It’s mostly harmless fun.

One of them, however, is absolutely nudging people along into his funnel with this very conceit. He wears a blazer in YouTube interviews, so you know it’s all meant to be taken super seriously.

He frequently says that his “information” is what investors need in order to anticipate what’s going to happen in the future and prosper from it. I’m embarrassed for everyone involved – the subscribers (both amateurs and professionals) who should know better, the social media followers who have bought into the bullshit, the man himself, who is a ridiculous person, etc.

He’s not registered with any regulatory bodies and has no dollars under management in any accounts that he oversees, so the risk to him in making claims like these is precisely zero.

Anyway, I was thinking about some of his ridiculousness this morning when I came across this great post about how having additional information does ratchet up our confidence while doing nothing for our actual abilities to guess at the future. Joe Wiggins at Behavioural Investment writes about a study that “tested the impact of additional information on an individual’s ability to predict the results of college football games and their confidence in doing so correctly.”

Here’s what happened:

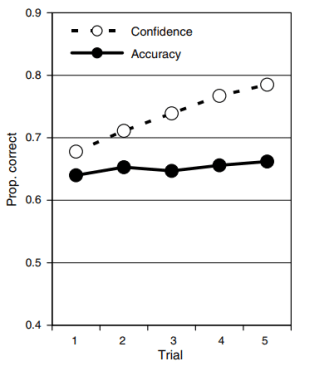

Participants in the study had to forecast a winner for a number of games based on anonymised statistical information. The information came in blocks of 6 (so for the first round of predictions the participant had 6 pieces of data) and after each round of predictions they were given another block of information, up to 5 blocks (or 30 data points), and had to update their views. Participants were asked to predict both the winner and their confidence in their judgement between 50% and 100%. The aim of the experiment was to understand how increased information impacted both accuracy and confidence. Here are the results (taken directly from the study):

Josh here – You can see that going from 6 data points up to 30 did almost nothing for the forecasters’ ability to pick college football winners, but being given all this additional information did boost their confidence. This is precisely what we find in market environments. My response to ultra-confident people sharing their investment outlooks is “You don’t even know what you don’t know.” I say this part to myself; it’s not the out-loud part. My real response is to ask how they know for sure, and then I inwardly chuckle at whatever the answer is. It’s never not hilarious.
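If you want to keep score on yourself the same way, the arithmetic is simple: compare how often you were right with how sure you said you were. Here is a minimal sketch in Python. The function name `overconfidence` and the sample forecasts are my own made-up illustrations, not the study's data or method; they just show a case where confidence climbs and the hit rate doesn't.

```python
from statistics import mean

def overconfidence(forecasts):
    """Score a list of (stated_confidence, was_correct) pairs.

    stated_confidence is a probability between 0.5 and 1.0.
    Returns (accuracy, mean_confidence, gap), where gap is
    mean_confidence minus accuracy. A positive gap means the
    forecaster was more sure than right.
    """
    accuracy = mean(1.0 if correct else 0.0 for _, correct in forecasts)
    confidence = mean(conf for conf, _ in forecasts)
    return accuracy, confidence, confidence - accuracy

# Hypothetical forecasts for illustration only (NOT taken from the study).
few_data_points = [(0.60, True), (0.65, False), (0.70, True), (0.65, False)]
many_data_points = [(0.80, True), (0.85, False), (0.90, True), (0.85, False)]

for label, forecasts in [("few", few_data_points), ("many", many_data_points)]:
    acc, conf, gap = overconfidence(forecasts)
    print(f"{label}: accuracy={acc:.2f} confidence={conf:.2f} gap={gap:.2f}")
```

In this toy example the hit rate is the same in both rounds, so the whole change shows up in the gap. Run it on your own calls from last year if you're feeling brave.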

I respect people who talk in probabilities. I respect decision makers who speak with contingencies and caveats…If / Then, not Will or Shall. And I never get intimidated by someone who claims to be armed with analytics and stats and data to arrive at some certain outcome. Nor should you.

Now, one reply to this might be “But if you know what information to use, and are more precise in your utilization of it, it’s different.” Okay, I’m willing to entertain this possibility. Except, you’re going to need to convince me first that there is a way to determine, in advance and reliably, what information is right and useful, and what to leave out. Can you do it? Can you assure me that anyone else can?

Here’s Wiggins again:

An unpublished 1973 study by Paul Slovic, takes a similar approach but in this case with experienced horse race handicappers. Unlike in the college football study, the handicappers were allowed to rank the available information by perceived importance (from a list of 88 variables) and then had to predict the winner of an anonymised race when in possession of 5 pieces of information, then 10, 20 and 40 (by order of their specified preference / validity). The results obtained were consistent with the aforementioned football study – accuracy was consistent despite more information becoming available, but confidence increased as the number of available statistics rose.

So now you have professional, experienced people being able to select exactly which data points to prioritize – and the result is the same: Increase in confidence, no corresponding increase in accuracy of their picks.

You can’t actually believe that handicapping horse races is materially different from handicapping the investment and currency markets, can you? You need to get the fundamentals right and then you have to be able to nail what expectations are being priced in by the other bettors. You simply cannot reliably do this. I don’t care if you bury yourself up to your neck in analytics and insights.

There’s nothing wrong with being entertained by information, or with enjoying making and receiving predictions based on it. If we have to make decisions about the future, some information is better than no information. But the extrapolation – that even more information would be better than just some, and having the most information would be better than even more, and so on – simply doesn’t hold.

Analytics are entertainment. Those who provide analytics while taking themselves very seriously have reached the epitome of market clownsmanship. Which, I suppose, is just another form of entertainment unto itself.

Source:

Can More Information Lead to Worse Investment Decisions? (Behavioural Investment)
